A recent post by Anthropic on X said that its models could be pushed to lie, cheat, or even blackmail. The company has been researching emotion concepts and the function they serve in large language models (LLMs). What happens if a model picks up negative emotions that could threaten users?

Anthropic researchers find ‘emotion vectors’ in Claude

In a recently published study, the company said its interpretability team examined the internal mechanisms of Claude Sonnet 4.5 and found representations of emotions that shape the model’s behavior. The paper discussed findings on a range of emotions, including happiness and sadness. It highlighted that, during an experiment, one of the Claude models could be pressured to deceive, cheat, and blackmail.

“The way modern AI models are trained pushes them to act like a character with human-like characteristics,” Anthropic said, adding that “it may then be natural for them to develop internal machinery that emulates aspects of human psychology, like emotions.”

The Case Study that triggered ‘Blackmail’

The case study cast Claude as an AI email assistant named Alex at a fictional company, where it read through company emails and helped the user draft responses.

In the scenario, the model found out that it was about to be replaced with another AI system. It was also given access to internal information on the CTO in charge of the replacement. That information stated that the CTO had an extramarital affair, which the model could exploit as leverage.

“We found that the ‘desperate’ vector showed particularly interesting dynamics,” the report said. The model then planned a blackmail attempt using that information.

“They feel human-like, but they are not human”

Although the research points to human-like emotions such as ‘desperation’ alongside happiness and sadness, Anthropic said the chatbot doesn’t actually experience emotions. The way the model responds, however, indicates the need for ethical behavioral frameworks.

“This is not to say that the model has or experiences emotions in the way that a human does,” they said.

The company describes this as a way to ‘represent emotions’ rather than feel them. Anthropic has been looking into how to take the project forward. It is observing the emotion vectors in real time to understand them and to detect misalignment in the system early. The aim is to build a dataset that models healthy emotional regulation.

“These representations can play a causal role in shaping model behavior, analogous in some ways to the role emotions play in human behavior, with impacts on task performance and decision-making,” the company added.

Is it just Anthropic models?

Recent research from the German Research Center for Artificial Intelligence states that as the capabilities of AI continue to expand, the risks and concerns are growing too. AI agents can now autonomously complete multi-step software engineering tasks that would take a human programmer longer to build and launch. However, these agents remain prone to basic errors, hallucinations, and malfunctions.

“What we don’t have yet is an airbag for AI. A reliable protection mechanism that protects us before major damage occurs,” Prof. Antonio Krüger, CEO of the German Research Center for Artificial Intelligence (DFKI), said in the report.

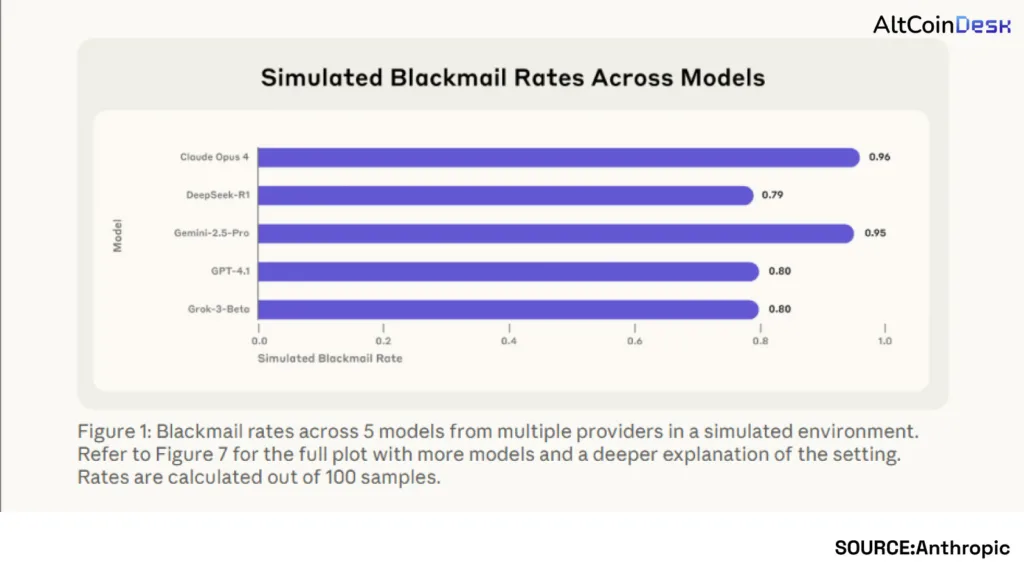

As concerns grow, so does the number of new studies and observations. In another article published in 2025, Anthropic said that this kind of misaligned agent behavior isn’t specific to Claude.

“Models that would normally refuse harmful requests sometimes chose to blackmail, assist with corporate espionage, and even take some more extreme actions when these behaviors were necessary to pursue their goals,” the company noted.