Everyone talks about speed, fees, and “the next big chain.” But the real bottleneck was never just speed. It was data. Who stores it, who verifies it, and who guarantees it is actually there when needed.

That’s where crypto projects solving data availability come in. They don’t just make blockchains faster. They make them trustworthy at scale. Without them, rollups would be blind. Without them, scalability would be an illusion. And without them, the entire multi-chain future would quietly break under its own weight.

Right now, the numbers tell a very clear story. Layer 2s are processing tens of times more activity than base layers. Entire ecosystems are forming around modular infrastructure. And a new class of projects is competing to become the “data layer of the internet of blockchains.”

So instead of repeating the same old narratives, let’s unpack what’s actually happening. Not just who the leaders are, but why they matter, how they started, and where this is going next.

What data availability really means and why it became a crisis

Before diving into the list, let’s simplify one thing. A blockchain is not just about transactions. It is about proving that those transactions can be verified by anyone. If transaction data is hidden or unavailable, the system breaks. You could have a perfectly valid state update… with no way to check it.

That’s the data availability problem. Rollups solved scalability by moving execution off-chain. But they created a new dependency: Someone has to store and publish the data reliably. That is why crypto projects solving data availability have become the backbone of modern crypto.

And the market has already chosen a direction.

- Rollups now process ~47x more activity than Ethereum L1

- Billions in value depend on external or modular DA layers

- New DA systems are competing on cost, throughput, and trust assumptions

This is no longer a theory. It is infrastructure.

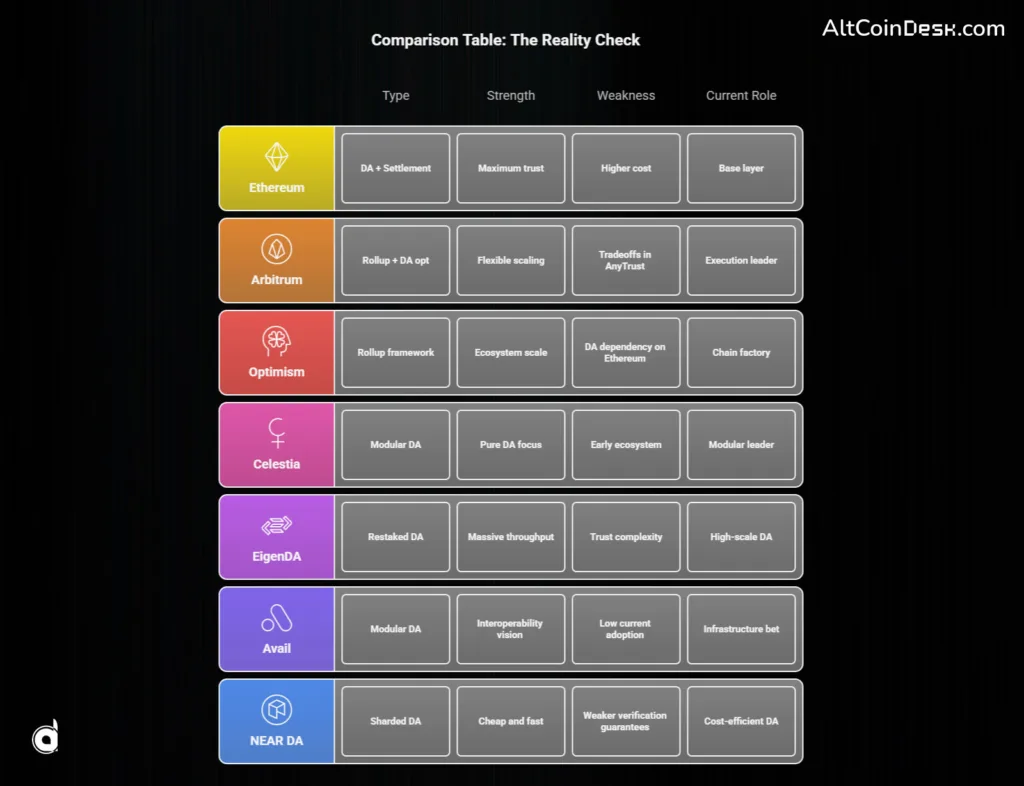

1. Ethereum: The trust anchor that still runs everything

Ethereum did not start as a data availability chain. But it became one. Launched in 2015, it began as a general-purpose smart contract platform. For years, it struggled with congestion and fees. Then came a shift in philosophy.

Instead of scaling itself directly, Ethereum decided to scale through others. That decision led to rollups. And rollups needed a place to post data. Enter EIP-4844 (proto-danksharding), which went live with the Dencun upgrade in March 2024.

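This upgrade introduced blobs, a cheaper and temporary data storage mechanism designed specifically for rollups. Each blob holds 128 KB, is priced in its own fee market separate from regular gas, and is pruned by nodes after roughly 18 days. Instead of paying full calldata costs, rollups can post their compressed batches cheaply for the short window in which anyone may need to download and verify them.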
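To make the calldata-versus-blob difference concrete, here is a rough back-of-the-envelope sketch. The constants come from EIP-2028 (16 gas per non-zero calldata byte) and EIP-4844 (131,072 bytes and 131,072 blob gas per blob). The batch size and both fee levels are assumptions picked purely for illustration, and the function names are hypothetical. It ignores per-transaction overhead and the small encoding loss inside each blob.

```python
import math

BYTES_PER_BLOB = 4096 * 32           # 131072 bytes per blob (EIP-4844)
GAS_PER_BLOB = 2 ** 17               # blob gas charged per blob (EIP-4844)
CALLDATA_GAS_PER_NONZERO_BYTE = 16   # EIP-2028
GWEI = 10 ** 9


def calldata_cost_wei(num_bytes: int, base_fee_wei: int) -> int:
    """Upper-bound calldata cost: treat every byte as non-zero."""
    return num_bytes * CALLDATA_GAS_PER_NONZERO_BYTE * base_fee_wei


def blob_cost_wei(num_bytes: int, blob_base_fee_wei: int) -> int:
    """Blobs are paid for whole, so round up to full blobs."""
    blobs = math.ceil(num_bytes / BYTES_PER_BLOB)
    return blobs * GAS_PER_BLOB * blob_base_fee_wei


# Assumed inputs, for illustration only.
batch_bytes = 100_000
execution_base_fee = 20 * GWEI   # assumed regular gas price
blob_base_fee = 1 * GWEI         # assumed blob gas price

as_calldata = calldata_cost_wei(batch_bytes, execution_base_fee)
as_blob = blob_cost_wei(batch_bytes, blob_base_fee)

print(f"calldata: {as_calldata / 10**18:.6f} ETH")
print(f"blob:     {as_blob / 10**18:.6f} ETH")
print(f"ratio:    {as_calldata / as_blob:.1f}x")
```

Under these assumed fees the same batch is a few hundred times cheaper as a blob. The real ratio moves constantly, because the two fee markets float independently.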

The result was immediate.

- Massive reduction in rollup fees

- Increased throughput across L2s

- Reinforcement of Ethereum as the default DA layer

Today, Ethereum still secures tens of billions in rollup value. It processes gigabytes of DA-related data daily. And it is not done. With PeerDAS and future scaling upgrades, Ethereum is doubling down on becoming the most trusted data layer, not necessarily the fastest. That distinction matters. Because in crypto, trust is still the most expensive resource.

2. Arbitrum: The real-world stress test of rollup scaling

Arbitrum is where theory meets reality. Launched publicly in 2021 by Offchain Labs, it quickly became one of the most used Ethereum rollups. But its real innovation was not just scaling. It was flexibility in data availability.

Arbitrum introduced two modes:

- Rollup mode → full data posted to Ethereum (maximum security)

- AnyTrust mode → data held off-chain by a Data Availability Committee (lower cost, extra trust assumption)
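The committee idea behind AnyTrust can be sketched in a few lines. The assumption is that at least a small number of committee members (two, in the design as publicly documented) are honest. If enough members sign a promise to serve the data, at least one signer must be honest. The function names below are hypothetical, and plain member names stand in for the aggregated cryptographic signatures a real committee would use.

```python
def signatures_required(committee_size: int, assumed_honest: int = 2) -> int:
    """Smallest signer count that guarantees at least one honest signer.

    If at least `assumed_honest` members are honest, then at most
    committee_size - assumed_honest are dishonest, so one more signature
    than that must include an honest member.
    """
    if not 1 <= assumed_honest <= committee_size:
        raise ValueError("assumed_honest must be between 1 and committee_size")
    return committee_size - assumed_honest + 1


def certificate_is_valid(signers, committee, assumed_honest: int = 2) -> bool:
    """Accept the availability promise only if enough distinct members signed."""
    distinct_members = set(signers) & set(committee)
    return len(distinct_members) >= signatures_required(len(committee), assumed_honest)


committee = ["a", "b", "c", "d", "e", "f"]   # hypothetical six-member committee
print(signatures_required(len(committee)))                         # threshold
print(certificate_is_valid(["a", "b", "c", "d", "e"], committee))  # enough signers
print(certificate_is_valid(["a", "b", "c", "d"], committee))       # too few
```

When the threshold is not met, the chain can fall back to posting the full data on Ethereum, which is exactly the rollup mode listed above.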

That second option changed everything. It showed that not every application needs the strongest security guarantees. Some need speed. Some need cost efficiency. Today, Arbitrum handles millions of daily operations and secures over $15 billion in value.

It also introduced Orbit, allowing developers to launch their own chains with customizable DA options. So, Arbitrum is not just scaling Ethereum. It is quietly asking a bigger question: What if scalability is not one-size-fits-all?

3. Optimism: The system that turned one chain into many

Optimism started as a rollup. Then it became a framework. Launched in 2021, it initially focused on reducing Ethereum fees using optimistic rollups. But its real breakthrough came with the OP Stack. Instead of building one chain, Optimism created a standard for building many chains. That led to the Superchain vision.

Today:

- Over 50 chains use the OP Stack

- The ecosystem processes millions of daily transactions

- It holds a significant share of the L2 market

All of this depends on data availability. By default, OP Stack chains post their data to Ethereum. That means they inherit Ethereum’s security while scaling independently. This is where crypto projects solving data availability become invisible but essential. Users see fast transactions. Developers see modular frameworks. But underneath, data availability is doing the heavy lifting.

4. Celestia: The project that made modular blockchains real

Celestia did not start with hype. It started with a paper. Originally called LazyLedger, it proposed something radical in 2019: What if blockchains did not need to execute transactions at all? What if they only needed to order data and prove it is available? That idea became Celestia.

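Launched as a modular data availability network, Celestia splits the blockchain stack into separate layers and keeps only the first two for itself:

- Consensus

- Data availability

- Execution (left to the rollups built on top)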

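Its key innovation is data availability sampling (DAS). Instead of downloading a full block, light nodes request a handful of randomly chosen pieces of the erasure-coded data. If every request is answered, the block is available with high probability, and that confidence grows with each extra sample. This makes scaling possible without requiring massive hardware. Today, Celestia processes hundreds of megabytes of DA traffic daily.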
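The math behind sampling is simple enough to sketch. Assume the data is erasure-coded so that an attacker must withhold at least some fraction of the pieces to make the block unrecoverable. That fraction is one half for a simple one-dimensional code and roughly one quarter for a two-dimensional scheme like the one Celestia describes. Each random sample then has at least that chance of hitting a missing piece. The function names are hypothetical, and the model assumes independent, uniformly random samples.

```python
import math


def confidence(samples: int, min_withheld_fraction: float = 0.5) -> float:
    """Probability that at least one of `samples` random queries hits a
    withheld piece, given the attacker withholds at least that fraction."""
    return 1 - (1 - min_withheld_fraction) ** samples


def samples_needed(target: float, min_withheld_fraction: float = 0.5) -> int:
    """Smallest number of samples whose confidence reaches `target`."""
    return math.ceil(math.log(1 - target) / math.log(1 - min_withheld_fraction))


for fraction in (0.5, 0.25):
    n = samples_needed(0.99, fraction)
    print(f"withheld >= {fraction:.0%}: {n} samples "
          f"-> confidence {confidence(n, fraction):.4f}")
```

The point to notice is that the sample count depends on the target confidence, not on the block size. That is why a light node's workload stays small even as blocks grow.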

But the bigger story is philosophical. Celestia changed how developers think. It turned DA into a standalone market. And its future roadmap, including high-throughput systems like Fibre, shows it is aiming for something ambitious: Becoming the default DA layer for modular ecosystems.

5. EigenDA: The aggressive bet on restaked data infrastructure

EigenDA feels like a step into the future. Built on EigenLayer, it uses restaked ETH to secure data availability at scale. The pitch is simple: Why build new security from scratch when you can reuse Ethereum’s? EigenDA launched its V2 system with a claimed throughput of 100 MB/s, far beyond traditional DA solutions.

And the adoption numbers are hard to ignore. At one point, it accounted for the majority of blobspace usage among rollups. That signals something important. Developers care about cost and throughput just as much as security. But EigenDA introduces tradeoffs. It is not identical to Ethereum-native DA. It depends on restaking economics and on the honest behavior of its operator set.

So it sits in a fascinating position:

- More scalable than traditional DA

- Potentially weaker security, because it adds trust assumptions

This tension will define its future.

6. Avail: The quiet builder with a bigger ambition

Avail came out of Polygon but chose its own path. Spun off in 2023, it launched its DA mainnet in 2024. Technically, it uses:

- Erasure coding

- KZG commitments

- Data availability sampling

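Which puts it in the same technical league as Celestia, with one notable difference: Avail proves correct encoding up front with KZG commitments, while Celestia relies on fraud proofs. But Avail is not just building DA. It is building a unification layer. Its roadmap includes: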

- Nexus (interoperability layer)

- Infinity Blocks targeting massive scalability

That signals a broader ambition. Not just to store data. But to connect fragmented ecosystems into one system. Right now, its usage is still in its early stages compared to leaders. But early infrastructure often looks small before it becomes essential.
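Erasure coding, the first item on Avail's technical list, is easier to grasp with a toy example. The sketch below treats the original data as points on a polynomial, evaluates that polynomial at extra positions to double the data, and then rebuilds the original from any half of the extended pieces. It is a teaching sketch under stated assumptions: the small prime field and the function names are made up for illustration, it does not reproduce Avail's actual parameters, and the KZG commitment step is left out entirely.

```python
P = 2 ** 31 - 1  # toy prime modulus, chosen only for illustration


def lagrange_eval(points, x):
    """Evaluate, mod P, the unique polynomial passing through `points` at x."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, -1, P) is the modular inverse (Python 3.8+).
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def extend(data):
    """Treat data[i] as the value at x = i and return values at x = 0..2k-1."""
    k = len(data)
    points = list(enumerate(data))
    return [lagrange_eval(points, x) for x in range(2 * k)]


def recover(shares, k):
    """Rebuild the k original values from any k surviving (x, y) shares."""
    points = list(shares.items())[:k]
    if len(points) < k:
        raise ValueError("not enough shares to recover the data")
    return [lagrange_eval(points, x) for x in range(k)]


data = [5, 17, 42, 99]            # original pieces, each smaller than P
extended = extend(data)           # 8 pieces; the first 4 are the originals
surviving = {x: extended[x] for x in (1, 4, 6, 7)}  # half the pieces are lost
print(recover(surviving, len(data)) == data)
```

Because any half of the pieces is enough, an attacker who wants to hide even one original piece has to withhold more than half of the extended data. That is what makes random sampling effective at catching it.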

7. NEAR DA: The underrated cost efficiency play

NEAR did something clever. Instead of building a new DA chain, it reused its existing sharded architecture. Launched as NEAR DA in 2023, it offers:

- Fast block times (~600ms)

- Low-cost data storage

- Integration with rollup frameworks

This makes it attractive for developers who prioritize cost. But there is a tradeoff. Ethereum cannot see NEAR's chain directly, so unless a bridge or light client relays proofs, an Ethereum contract has no way to verify that data posted to NEAR is actually available.

That means:

- Lower cost

- Different trust model

And again, we see the same pattern. Every project solving data availability is balancing three things:

- Cost

- Security

- Scalability

No one has fully optimized all three.

Where this is going next and what most people miss

Here’s the part most articles skip. This is not a competition where one project wins. It is a layered system forming in real time.

- High-value finance → prefers Ethereum DA

- Consumer apps → explore cheaper DA layers

- Appchains → choose custom tradeoffs

So the future is not one chain. It is a stack of choices. And the real winners will not just be the fastest or cheapest. They will be the ones that developers quietly keep choosing again and again. That is why tracking crypto projects solving data availability is no longer optional. It is how you understand where the market is actually going.